Hi!

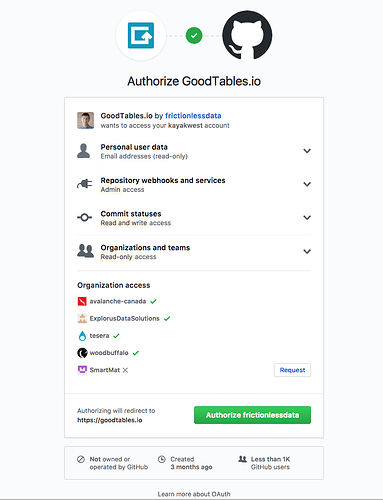

I’m trying to use goodtables.io to add continuous data validation to the publicbodies project. The repository has a single datapackage.json file that points to several CSV files described using tabular data packages. Since all the files use the same schema, I’ve used a single file for the schema and referenced it in the datapackage.json file as described in the specs and discussed on this topic.
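For anyone following along, here is a minimal sketch of what resolving such a reference involves, under some assumptions. The file name `schema.json` and the helper `resolve_schema_refs` are hypothetical, only local relative paths are handled (no URLs), and this is not how goodtables itself does it. It just illustrates the idea that a resource's `schema` may be a string pointing at a shared schema file instead of an inline object.

```python
import json
from pathlib import Path


def resolve_schema_refs(descriptor: dict, base_dir: Path) -> dict:
    """Return a copy of the descriptor with string schema references inlined.

    Hypothetical helper: a string `schema` is assumed to be a path
    relative to the directory that contains datapackage.json.
    """
    resolved = dict(descriptor)
    resolved["resources"] = []
    for resource in descriptor.get("resources", []):
        resource = dict(resource)  # shallow copy, so the input stays untouched
        schema = resource.get("schema")
        if isinstance(schema, str):
            schema_path = base_dir / schema
            if not schema_path.is_file():
                raise FileNotFoundError(
                    f"cannot resolve schema reference: {schema}"
                )
            resource["schema"] = json.loads(
                schema_path.read_text(encoding="utf-8")
            )
        resolved["resources"].append(resource)
    return resolved
```

Running this against the directory that holds `datapackage.json` should either inline the shared schema into every resource or raise an error naming the reference it could not find, which is a quick way to rule out a broken path before comparing the two tools.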

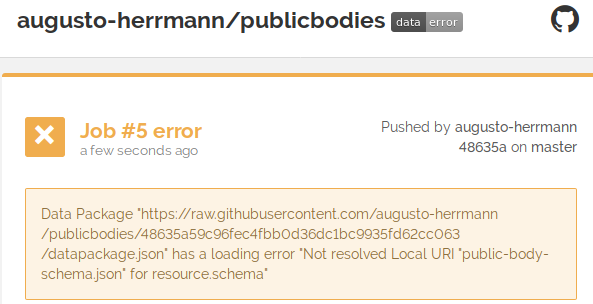

So far I’ve added the repository, but I get a validation error telling me it wasn’t able to resolve the reference to the table schema file, as below:

However, the command line goodtables tool is able to parse the referenced table schema just fine.

Here’s a sample:

    $ goodtables datapackage.json
    DATASET
    =======
    {'error-count': 483,
     'preset': 'nested',
     'table-count': 11,
     'time': 14.937,
     'valid': False}

    TABLE [1]
    =========
    {'datapackage': 'datapackage.json',
     'error-count': 0,
     'format': 'inline',
     'headers': ['id',
                 'name',
                 'abbreviation',
                 'other_names',
                 'description',
                 'classification',
                 'parent_id',
                 'founding_date',
                 'dissolution_date',
                 'image',
                 'url',
                 'jurisdiction_code',
                 'email',
                 'address',
                 'contact',
                 'tags',
                 'source_url'],
     'row-count': 259,
     'schema': 'table-schema',
     'source': '/home/herrmann/dev/publicbodies/src/data/br.csv',
     'time': 1.724,
     'valid': True}

It then goes on to report the errors it actually finds in the other tables. The point is that the command line tool correctly interprets and uses the external reference to the table schema, but the online tool at goodtables.io does not. Shouldn’t they be consistent? Doesn’t goodtables.io use the same tool on the backend?